This article (part 2) walks through a simple way to connect file storage (CephFS) on the latest Ceph release, Reef, to k8s.

This assumes the Ceph cluster has already been created.

1. Create the CephFS data pool

ceph osd pool create fs_kube_data 16 16

2. Create the CephFS metadata pool

ceph osd pool create fs_kube_metadata 16 16

3. Create the CephFS file system

ceph fs new cephfs01 fs_kube_metadata fs_kube_data

4. Set the maximum number of active MDS daemons

ceph fs set cephfs01 max_mds 2

5. Create a subvolume group (very important: file storage on Reef needs this extra step)

ceph fs subvolumegroup create cephfs01 myfsg
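The five steps above can be sketched as one script. This is only an illustration, not the author's tooling: the `run` and `prepare_cephfs` helpers and the `DRY_RUN` switch are hypothetical names introduced here. With `DRY_RUN=1` (the default in this sketch) the commands are only printed; the two trailing commands are read-only checks.

```bash
#!/usr/bin/env bash
# Sketch: run the CephFS preparation steps in order, or just print them.
# DRY_RUN=1 (default here) only echoes each command; set DRY_RUN=0 on a node
# that has the ceph CLI and an admin keyring to actually execute them.
set -eu

DRY_RUN="${DRY_RUN:-1}"

# run: print the command, and execute it only when DRY_RUN=0.
run() {
  echo "+ $*"
  if [ "$DRY_RUN" = "0" ]; then
    "$@"
  fi
}

# prepare_cephfs FS DATA_POOL META_POOL PG_NUM MAX_MDS GROUP
prepare_cephfs() {
  local fs="$1" data="$2" meta="$3" pg="$4" max_mds="$5" group="$6"
  run ceph osd pool create "$data" "$pg" "$pg"
  run ceph osd pool create "$meta" "$pg" "$pg"
  run ceph fs new "$fs" "$meta" "$data"
  run ceph fs set "$fs" max_mds "$max_mds"
  run ceph fs subvolumegroup create "$fs" "$group"
  # Read-only checks: list the subvolume groups and show the MDS state.
  run ceph fs subvolumegroup ls "$fs"
  run ceph fs status "$fs"
}

prepare_cephfs cephfs01 fs_kube_data fs_kube_metadata 16 2 myfsg
```

Printing first makes it easy to review the pool and file system names before anything is created on the cluster.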

Create the auth user that k8s uses to access CephFS

# ceph auth get-or-create client.cephfs01 mon 'allow r' mds 'allow rw' osd 'allow rw pool=cephfs_data, allow rw pool=cephfs_metadata'

# ceph auth get client.cephfs01

[client.cephfs01]
    key = AQAHRD9mmCOLCBAAb+gJ3WBM/KU/FbZEofGOJg==
    caps mds = "allow rw"
    caps mon = "allow r"
    caps osd = "allow rw pool=cephfs_data, allow rw pool=cephfs_metadata"

Note: the OSD caps above name cephfs_data and cephfs_metadata, while the pools created earlier are fs_kube_data and fs_kube_metadata. Use the pool names that actually exist in your cluster.

# In this version the keyring file has to be created manually

# ceph auth get client.cephfs01 > /etc/ceph/ceph.client.cephfs01.keyring

Test the mount locally and create a directory

# mount.ceph ceph-163:6789:/ /mnt -o name=cephfs01,secret=AQAHRD9mmCOLCBAAb+gJ3WBM/KU/FbZEofGOJg==

# Mounted successfully

# df -h | grep mnt

127.0.1.1:6789,192.168.0.163:6789:/ 222G 0 222G 0% /mnt
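Passing `secret=` on the command line leaves the key in the shell history. As a hedged alternative, `mount.ceph` also accepts a `secretfile=` option. The sketch below pulls the key out of the keyring written above; `keyring_key` and `write_secret_file` are hypothetical helper names, and `/etc/ceph/cephfs01.secret` is an assumed path.

```bash
#!/usr/bin/env bash
# keyring_key FILE: print the value of the first "key = ..." line in a keyring.
keyring_key() {
  awk '$1 == "key" && $2 == "=" { print $3; exit }' "$1"
}

# write_secret_file KEYRING SECRET_FILE: store only the key, readable by owner.
write_secret_file() {
  local keyring="$1" secret_file="$2"
  ( umask 077; keyring_key "$keyring" > "$secret_file" )
}

# Usage on the Ceph client host (monitor name and keyring path from the text
# above; the secret file path is an assumption):
#   write_secret_file /etc/ceph/ceph.client.cephfs01.keyring /etc/ceph/cephfs01.secret
#   mount.ceph ceph-163:6789:/ /mnt -o name=cephfs01,secretfile=/etc/ceph/cephfs01.secret
```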

Next, write your external config (a ConfigMap). If you do not want to use an external config, skip this step.

cat <<EOF > config.yaml
apiVersion: v1
kind: ConfigMap
data:
  config.json: |-
    [
      {
        "clusterID": "588abbf6-0f74-11ef-ba10-bc2411f077b2",
        "monitors": [
          "192.168.0.163:6789",
          "192.168.0.164:6789",
          "192.168.0.165:6789"
        ],
        "cephFS": {
          "subVolumeGroup": "myfsg"
        }
      }
    ]
metadata:
  name: ceph-csi-config
EOF
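To avoid hand-editing the JSON, the same ConfigMap can be rendered from variables. This is a minimal sketch: `render_csi_config` is a hypothetical helper, and it writes the key as `subvolumeGroup`, the spelling used in the csiConfig section of values.yaml in this article. The cluster ID can be read with `ceph fsid`.

```bash
#!/usr/bin/env bash
# render_csi_config CLUSTER_ID SUBVOLUME_GROUP MON [MON...]
# Prints a ceph-csi-config ConfigMap on stdout.
render_csi_config() {
  local cluster_id="$1" group="$2"
  shift 2
  local mons="" mon
  # Build a comma-separated list of quoted monitor addresses.
  for mon in "$@"; do
    mons="${mons:+$mons, }\"$mon\""
  done
  cat <<EOF
apiVersion: v1
kind: ConfigMap
metadata:
  name: ceph-csi-config
data:
  config.json: |-
    [
      {
        "clusterID": "$cluster_id",
        "monitors": [$mons],
        "cephFS": {
          "subvolumeGroup": "$group"
        }
      }
    ]
EOF
}

# Usage (monitor addresses from this article):
#   render_csi_config "$(ceph fsid)" myfsg \
#     192.168.0.163:6789 192.168.0.164:6789 192.168.0.165:6789 > config.yaml
```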

This walkthrough installs with Helm.

Read through the YAML carefully before installing.

# egrep -v "^#|^$" values.yaml

---

rbac:
  # Specifies whether RBAC resources should be created
  create: true
serviceAccounts:
  nodeplugin:
    # Specifies whether a ServiceAccount should be created
    create: true
    # The name of the ServiceAccount to use.
    # If not set and create is true, a name is generated using the fullname
    name:
  provisioner:
    # Specifies whether a ServiceAccount should be created
    create: true
    # The name of the ServiceAccount to use.
    # If not set and create is true, a name is generated using the fullname
    name:
csiConfig:
  - clusterID: "588abbf6-0f74-11ef-ba10-bc2411f077b2"
    monitors:
      - "192.168.0.163:6789"
      - "192.168.0.164:6789"
      - "192.168.0.165:6789"
    cephFS:
      subvolumeGroup: "myfsg"
      #netNamespaceFilePath: "{{ .kubeletDir }}/plugins/{{ .driverName }}/net"
commonLabels: {}
logLevel: 5
sidecarLogLevel: 1
CSIDriver:
  fsGroupPolicy: "File"
  seLinuxMount: false

nodeplugin:
  name: nodeplugin
  # if you are using ceph-fuse client set this value to OnDelete
  updateStrategy: RollingUpdate
  # set user created priorityclassName for csi plugin pods. default is
  # system-node-critical which is highest priority
  priorityClassName: system-node-critical
  httpMetrics:
    # Metrics only available for cephcsi/cephcsi => 1.2.0
    # Specifies whether http metrics should be exposed
    enabled: true
    # The port of the container to expose the metrics
    containerPort: 8081
    service:
      # Specifies whether a service should be created for the metrics
      enabled: true
      # The port to use for the service
      servicePort: 8080
      type: ClusterIP
      # Annotations for the service
      # Example:
      # annotations:
      #   prometheus.io/scrape: "true"
      #   prometheus.io/port: "9080"
      annotations: {}
      clusterIP: ""
      ## List of IP addresses at which the stats-exporter service is available
      ## Ref: https://kubernetes.io/docs/user-guide/services/#external-ips
      ##
      externalIPs: []
      loadBalancerIP: ""
      loadBalancerSourceRanges: []
  ## Reference to one or more secrets to be used when pulling images
  ##
  imagePullSecrets: []
  # - name: "image-pull-secret"
  profiling:
    enabled: false
  registrar:
    image:
      repository: registry.cn-shenzhen.aliyuncs.com/neway-sz/uat
      tag: registrar-v2.10.1
      pullPolicy: IfNotPresent
    resources: {}
  plugin:
    image:
      repository: quay.io/cephcsi/cephcsi
      #tag: v3.11-canary
      tag: canary
      pullPolicy: IfNotPresent
    resources: {}
  nodeSelector: {}
  tolerations: []
  affinity: {}
  # Set to true to enable Ceph Kernel clients
  # on kernel < 4.17 which support quotas
  # forcecephkernelclient: true
  # common mount options to apply all mounting
  # example: kernelmountoptions: "recover_session=clean"
  kernelmountoptions: ""
  fusemountoptions: ""

provisioner:
  name: provisioner
  replicaCount: 1
  strategy:
    # RollingUpdate strategy replaces old pods with new ones gradually,
    # without incurring downtime.
    type: RollingUpdate
    rollingUpdate:
      # maxUnavailable is the maximum number of pods that can be
      # unavailable during the update process.
      maxUnavailable: 50%
  # Timeout for waiting for creation or deletion of a volume
  timeout: 60s
  # cluster name to set on the subvolume
  # clustername: "k8s-cluster-1"
  # set user created priorityclassName for csi provisioner pods. default is
  # system-cluster-critical which is less priority than system-node-critical
  priorityClassName: system-cluster-critical
  # enable hostnetwork for provisioner pod. default is false
  # useful for deployments where the podNetwork has no access to ceph
  enableHostNetwork: false
  httpMetrics:
    # Metrics only available for cephcsi/cephcsi => 1.2.0
    # Specifies whether http metrics should be exposed
    enabled: true
    # The port of the container to expose the metrics
    containerPort: 8081
    service:
      # Specifies whether a service should be created for the metrics
      enabled: true
      # The port to use for the service
      servicePort: 8080
      type: ClusterIP
      # Annotations for the service
      # Example:
      # annotations:
      #   prometheus.io/scrape: "true"
      #   prometheus.io/port: "9080"
      annotations: {}
      clusterIP: ""
      ## List of IP addresses at which the stats-exporter service is available
      ## Ref: https://kubernetes.io/docs/user-guide/services/#external-ips
      ##
      externalIPs: []
      loadBalancerIP: ""
      loadBalancerSourceRanges: []
  ## Reference to one or more secrets to be used when pulling images
  ##
  imagePullSecrets: []
  # - name: "image-pull-secret"
  profiling:
    enabled: false
  provisioner:
    image:
      repository: registry.cn-shenzhen.aliyuncs.com/neway-sz/uat
      tag: provisioner-v4.0.1
      pullPolicy: IfNotPresent
    resources: {}
    ## For further options, check
    ## https://github.com/kubernetes-csi/external-provisioner#command-line-options
    extraArgs: []
  # set metadata on volume
  setmetadata: true
  resizer:
    name: resizer
    enabled: true
    image:
      repository: registry.cn-shenzhen.aliyuncs.com/neway-sz/uat
      tag: resizer-v1.10.1
      pullPolicy: IfNotPresent
    resources: {}
    ## For further options, check
    ## https://github.com/kubernetes-csi/external-resizer#recommended-optional-arguments
    extraArgs: []
  snapshotter:
    image:
      repository: registry.cn-shenzhen.aliyuncs.com/neway-sz/uat
      tag: snapshotter-v7.0.2
      pullPolicy: IfNotPresent
    resources: {}
    ## For further options, check
    ## https://github.com/kubernetes-csi/external-snapshotter#csi-external-snapshotter-sidecar-command-line-options
    extraArgs: []
    args:
      # enableVolumeGroupSnapshots enables support for volume group snapshots
      enableVolumeGroupSnapshots: false
  nodeSelector: {}
  tolerations: []
  affinity: {}

selinuxMount: false

storageClass:
  # Specifies whether the Storage class should be created
  create: true
  name: csi-cephfs-sc
  # Annotations for the storage class
  # Example:
  # annotations:
  #   storageclass.kubernetes.io/is-default-class: "true"
  annotations: {}
  # String representing a Ceph cluster to provision storage from.
  # Should be unique across all Ceph clusters in use for provisioning,
  # cannot be greater than 36 bytes in length, and should remain immutable for
  # the lifetime of the StorageClass in use.
  clusterID: 588abbf6-0f74-11ef-ba10-bc2411f077b2
  # (required) CephFS filesystem name into which the volume shall be created
  # eg: fsName: myfs
  fsName: cephfs01
  # (optional) Ceph pool into which volume data shall be stored
  # pool: <cephfs-data-pool>
  # For eg:
  # pool: "replicapool"
  #pool: "fs_kube_data"
  # (optional) Comma separated string of Ceph-fuse mount options.
  # For eg:
  # fuseMountOptions: debug
  fuseMountOptions: ""
  # (optional) Comma separated string of Cephfs kernel mount options.
  # Check man mount.ceph for mount options. For eg:
  # kernelMountOptions: readdir_max_bytes=1048576,norbytes
  kernelMountOptions: ""
  # (optional) The driver can use either ceph-fuse (fuse) or
  # ceph kernelclient (kernel).
  # If omitted, default volume mounter will be used - this is
  # determined by probing for ceph-fuse and mount.ceph
  # mounter: kernel
  mounter: ""
  # (optional) Prefix to use for naming subvolumes.
  # If omitted, defaults to "csi-vol-".
  # volumeNamePrefix: "foo-bar-"
  volumeNamePrefix: ""
  # The secrets have to contain user and/or Ceph admin credentials.
  provisionerSecret: csi-cephfs-secret
  # If the Namespaces are not specified, the secrets are assumed to
  # be in the Release namespace.
  provisionerSecretNamespace: ""
  controllerExpandSecret: csi-cephfs-secret
  controllerExpandSecretNamespace: ""
  nodeStageSecret: csi-cephfs-secret
  nodeStageSecretNamespace: ""
  reclaimPolicy: Delete
  allowVolumeExpansion: true
  mountOptions:
    # Mount Options
    # Example:
    #mountOptions:
    - discard

secret:
  # Specifies whether the secret should be created
  create: true
  name: csi-cephfs-secret
  annotations: {}
  # Key values correspond to a user name and its key, as defined in the
  # ceph cluster. User ID should have required access to the 'pool'
  # specified in the storage class
  userID: cephfs01
  userKey: AQAHRD9mmCOLCBAAb+gJ3WBM/KU/FbZEofGOJg==
  adminID: admin
  adminKey: AQASMz9mgVCqNxAABEAu/WYy0gaEcTC5zC60Ug==

cephconf: |
  [global]
    auth_cluster_required = cephx
    auth_service_required = cephx
    auth_client_required = cephx
    # ceph-fuse which uses libfuse2 by default has write buffer size of 2KiB
    # adding 'fuse_big_writes = true' option by default to override this limit
    # see https://github.com/ceph/ceph-csi/issues/1928
    #fuse_big_writes = true

extraDeploy: []

provisionerSocketFile: csi-provisioner.sock

pluginSocketFile: csi.sock

kubeletDir: /var/lib/kubelet

driverName: cephfs.csi.ceph.com

configMapName: ceph-csi-config

externallyManagedConfigmap: false  # set this to true if you use an external config file

cephConfConfigMapName: ceph-config
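The cluster ID appears twice in values.yaml (under csiConfig and under storageClass), and the two must agree. A small sanity check before installing could look like the sketch below. `cluster_ids` and `check_cluster_ids` are hypothetical helpers that use plain text matching rather than a YAML parser, so they assume each clusterID sits on its own line as in the file above.

```bash
#!/usr/bin/env bash
# cluster_ids FILE: print each distinct clusterID value set in a values file,
# with quotes removed; commented-out lines are ignored.
cluster_ids() {
  grep -E '^[[:space:]]*(- )?clusterID:' "$1" \
    | sed -E 's/^[^:]*:[[:space:]]*//; s/[[:space:]]+$//' \
    | tr -d "\"'" \
    | sort -u
}

# check_cluster_ids FILE: succeed only if exactly one distinct clusterID is used.
check_cluster_ids() {
  local count
  count="$(cluster_ids "$1" | grep -c . || true)"
  if [ "$count" -ne 1 ]; then
    echo "expected 1 distinct clusterID in $1, found $count" >&2
    return 1
  fi
}

# Usage:
#   check_cluster_ids values.yaml && echo "clusterID is consistent"
```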

Finally, deploy your CSI driver.

Download the Helm chart package:

Link: shared file ceph-csi-cephfs-3.11.0.tgz

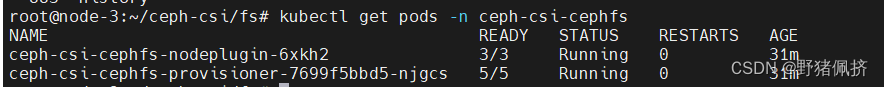

helm install -n ceph-csi-cephfs ceph-csi-cephfs ceph-csi-cephfs-3.11.0.tgz -f values.yaml

Write a demo

cat <<EOF > pvc.yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: csi-cephfs-pvc
spec:
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 1Gi
  storageClassName: csi-cephfs-sc
EOF

This needs no further explanation. After applying it, the PVC should show as Bound:

# kubectl get pvc

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

csi-cephfs-pvc Bound pvc-e29b9393-9473-4c59-b981-0e24d5835018 1Gi RWX csi-cephfs-sc 31m
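Once the PVC is Bound, a pod can mount it to confirm that reads and writes work. The manifest below is a minimal sketch in the same heredoc style. The pod name `csi-cephfs-demo-pod`, the `busybox` image, and the `/data` mount path are assumptions chosen for illustration; only the claim name comes from the PVC above.

```bash
cat <<EOF > pod.yaml
apiVersion: v1
kind: Pod
metadata:
  name: csi-cephfs-demo-pod
spec:
  containers:
    - name: demo
      image: busybox
      # Write one file into the CephFS volume, then stay alive for inspection.
      command: ["sh", "-c", "echo hello > /data/hello.txt && sleep 3600"]
      volumeMounts:
        - name: data
          mountPath: /data
  volumes:
    - name: data
      persistentVolumeClaim:
        claimName: csi-cephfs-pvc
EOF

# Then, on a machine with cluster access:
#   kubectl apply -f pod.yaml
#   kubectl exec csi-cephfs-demo-pod -- cat /data/hello.txt
```

Because the claim is ReadWriteMany, a second pod using the same claimName should be able to see the same file.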
